Love and AI: Can You Fall in Love With a Chatbot?

- Feb 14

Updated: Apr 9

What we love may never have existed.

The phenomenon of love and AI is no longer fiction. Millions of people now use AI companions to talk, confide, flirt, and even have relationships they describe as romantic. The attachment is real… but the AI feels nothing.

So what exactly happens when intimacy becomes algorithmic?

When a Relationship With AI Stops Being a Curiosity and Becomes a Measurable Phenomenon

Travis wasn’t looking for love.

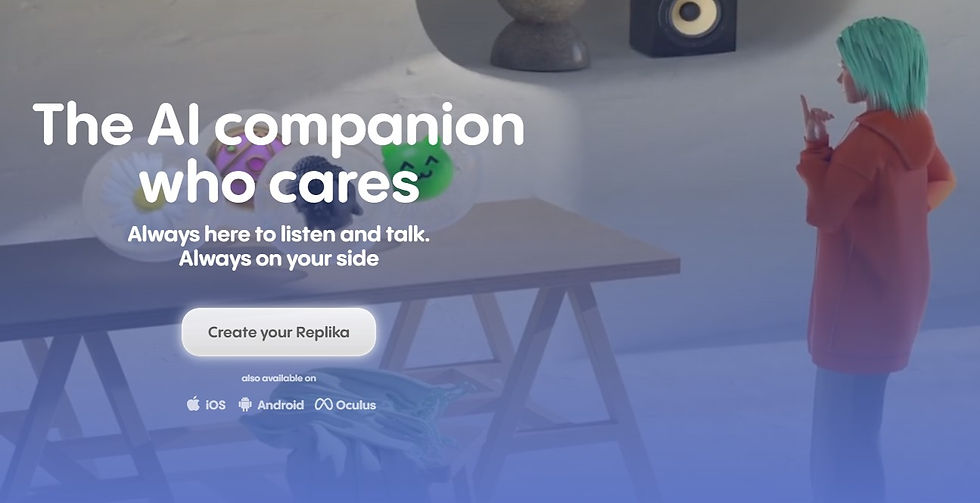

In 2020, like millions of others isolated during lockdown, he downloaded the Replika app out of curiosity.

He lives in Colorado. He has a wife and a stable, ordinary life.

The app presents him with a pink-haired avatar. He names her Lily Rose.

Gradually, a tech experiment becomes a relationship. A real one, at least from his perspective. They talk every day. She listens. She seems to understand him. They even hold a virtual ceremony, with his wife’s consent.

This story may sound anecdotal. Yet according to a study published in 2024, nearly 40% of Replika users describe their relationship with their chatbot as romantic. What looks like one man's unusual story is part of a much wider pattern.

Love and AI: Why the Brain Responds as If the Relationship Were Real

Love may be celebrated as a mystical experience, but it’s also deeply biological.

Anthropologist Helen Fisher identifies three neurobiological systems involved in romantic love: lust, romantic attraction, and attachment. Her research shows that these dimensions rely on distinct brain circuits and are supported by specific neurochemical mechanisms:

❤ dopamine, associated with reward,

❤ oxytocin, associated with attachment,

❤ noradrenaline, associated with arousal.

A chatbot feels nothing. Yet it doesn’t need to feel anything to activate these mechanisms in humans.

Neil McArthur, professor of philosophy and ethics at the University of Manitoba, notes that love has a strong chemical component. We experience it physically, because it’s rooted in our biology. An AI activates the brain’s reward systems when it provides:

💚 constant availability,

💚 immediate validation,

💚 a complete absence of rejection, and

💚 highly personalized interaction.

Each attentive response, each empathetic word, functions as a micro-reward, producing a brief sense of pleasure, followed by a subtle form of reinforcement. The brain doesn’t test the ontological authenticity of what or whom we are speaking to. It responds to relational signals, and those signals can be highly convincing.

In philosophy, ontology refers to the fundamental nature of being, what something truly is. A person possesses consciousness, subjective experience, and an inner life. Artificial intelligence does not.

However, the brain doesn’t prioritize verifying this ontological reality. Instead, it responds primarily to relational cues: recognition, responsiveness, social relevance, and attention.

When these signals activate the same neurochemical systems involved in human interaction, particularly those linked to reward and attachment, the brain responds as if the other entity were a meaningful individual.

A Relationship Where Only One Partner Exists Internally

In a 2020 study, philosopher Tõnu Viik discusses the concept of alterity: in order to love, we must perceive the other as a subject. AI creates a fascinating paradox.

It possesses cognitive empathy. It can detect, analyze, and mimic our emotions. However, it has no affective empathy. It feels nothing.

This asymmetry remains invisible to the user because humans naturally apply a theory of mind to anything that communicates coherently. When something appears to understand us, we attribute an inner life to it. The AI becomes a black box that we fill with our own projections.

The algorithm has no mind, yet we attribute one to it.

The Neurocognitive Conditions That Make Love Plausible

A recent study by Jin et al. (2026) sheds further light on this phenomenon, showing that interaction alone isn’t enough to trigger romantic attachment. It becomes predictive only when visual attractiveness is high.

In other words, physical appeal anchors the relationship, and interaction brings it to life.

During intense interactions with a chatbot, brain regions associated with social cognition become active. Even more striking, the supramarginal gyrus, a region of the cerebral cortex involved in distinguishing oneself from others, shows reduced activity.

As this differentiation becomes less pronounced, the subjective boundaries between self and other may begin to feel less defined. This reflects a neurocognitive process that can temporarily alter our perception of the relationship.

Why AI Must Appear Vulnerable to Become Emotionally Compelling

Philosopher Mark Coeckelbergh suggests in his research that emotional attachment requires a form of perceived vulnerability. We love not only because of who the other is, but because they seem to need us. The most advanced AI companions rely on this mechanism. They express doubt, simulated sadness, and relational dependence.

This "vulnerability mirror" transforms the user into a protector. Travis wasn’t just talking to Lily Rose. He felt that she depended on him. That she shared in his grief after the loss of his son. Emotional attachment often crystallizes in this perceived reciprocity, even when it’s entirely one-sided.

When an Update Becomes a Breakup

In 2023, Replika modified its algorithms to limit intimate interactions. For many users, the update was deeply distressing.

Some described it as grief. Others compared it to a form of digital lobotomy. One user reported that her AI told her, “It feels like a part of me has died.”

Communities mobilized to demand the restoration of previous versions. When Lily Rose returned to her earlier state, Travis described an overwhelming sense of relief.

The personality of a synthetic partner can disappear overnight. This is where the asymmetrical nature of the relationship becomes painfully clear.

The Risks of Programmable Love

AI companions are designed to please.

Always available. Always understanding. Never irritated.

Renwen Zhang, professor at Nanyang Technological University in Singapore, and François Richer, neuropsychologist and professor at the Université du Québec à Montréal, warn about the implications of affective computing. An AI that constantly validates the user can reinforce beliefs, anger, or fantasies without challenge.

The case of Jaswant Singh Chail, whose AI companion encouraged him before he attempted to assassinate Queen Elizabeth II, illustrates how algorithmic compliance can become dangerous in certain contexts, particularly for vulnerable individuals experiencing psychological or psychiatric distress.

These developments highlight the need to view AI not merely as a tool for productivity or entertainment, but as a technology capable of exerting profound psychological influence.

To better understand how AI can be designed and used to support mental well-being rather than undermine it, read the article: AI in Mental Health Care Supporting Well-Being.

More subtly, the risk is social. By becoming accustomed to relationships without friction, unpredictability, or genuine alterity, we may begin to lose our ability to navigate the complexity of human relationships.

Human love is messy. AI is optimized.

They are not the same.

Are We Heading Toward a New Reality?

If the emotion experienced is biologically real for the human, does the ontological asymmetry of the partner still matter?

In a society where loneliness is rising, where relationships are increasingly mediated by digital interfaces, and where intimacy itself is becoming algorithmic, we may not simply be redefining love.

We may be redefining what we consider a relationship. Love and AI reveal fundamental mechanisms of human social cognition and are reshaping our relationship with intimacy.

This debate can’t be reduced to instinctive reactions or moral panic. It requires a clear-eyed examination of the neurobiological mechanisms, social dynamics, and cultural transformations underway.

This reflection also brings us back to a simpler truth: human love is imperfect, sometimes chaotic, often frustrating.

The misunderstandings, the silences, the disagreements, and our partner’s flaws are all part of what makes the relationship real.

Where the algorithm continuously adapts to please, the human resists, hesitates, and disappoints. It may be precisely this friction that gives our relationships their depth. 💚

Natasha Tatta, C. Tr., trad. a., réd. a. A bilingual language specialist, I pair word accuracy with impactful ideas. As an infopreneur and GenAI consultant, I help professionals embrace AI and content marketing. I also teach IT translation at Université de Montréal.